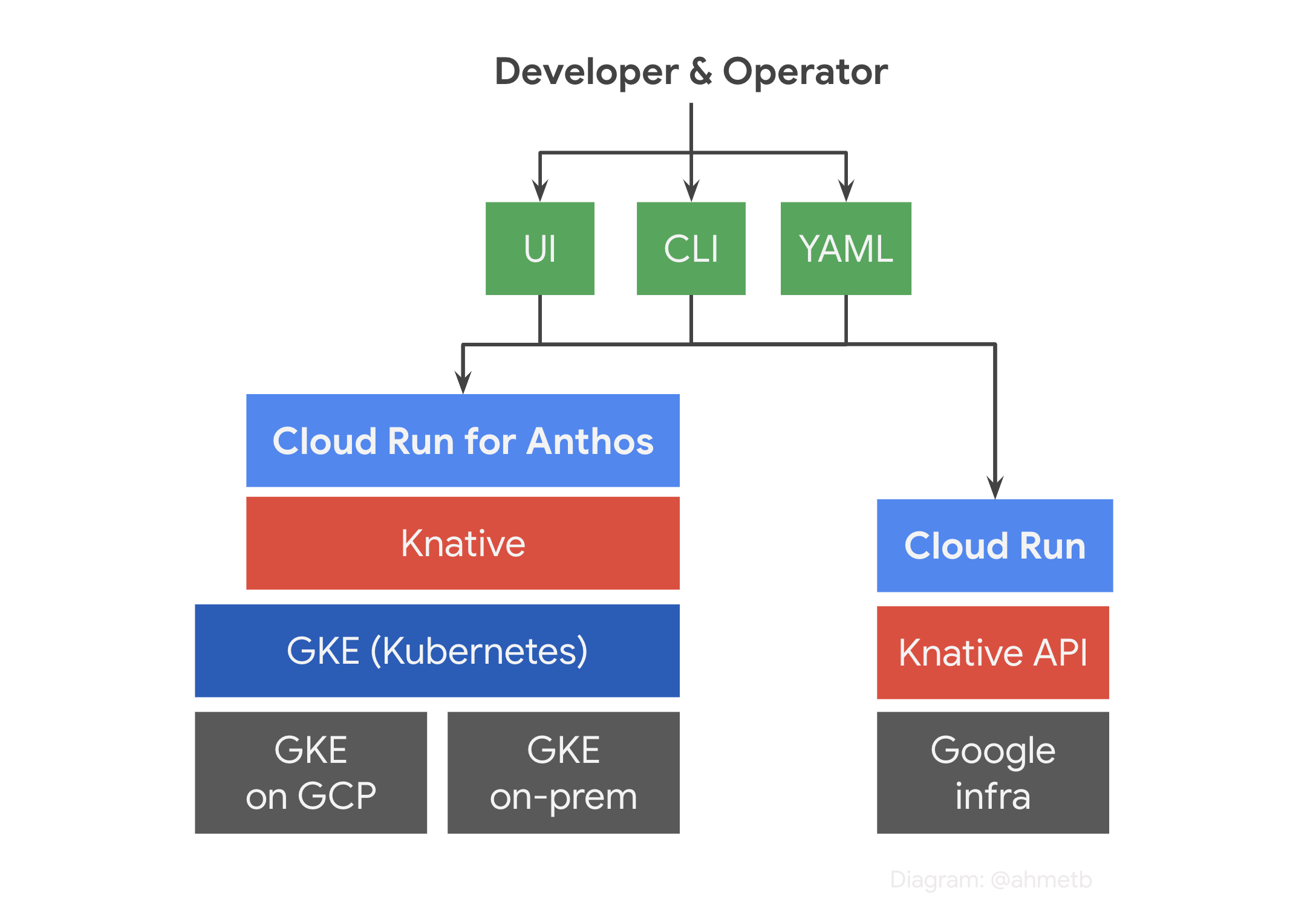

Google’s serverless containers as a service (CaaS) platform Cloud Run claims to implement the Knative API and its runtime contract. If true, this would mean that with the same YAML manifest file, you can run your apps on Google’s infrastructure, or a Kubernetes cluster anywhere.

Knative implements a lot of autoscaling and networking goodies directly on top of Kubernetes APIs. However, Google Cloud Run does not have Kubernetes, so it is actually a “reimplementation” of Knative APIs on Google’s infrastructure.

In this article, I’m going to walk you through the parts of the Knative API that work on Cloud Run and the parts that are not yet supported.[1]

# Same format, same runtime, same API

Cloud Run adheres to the same deployment format as Knative and Kubernetes: it accepts an OCI image (more commonly known as a “Docker image”) as the deployment format.

When the container is executed, it follows the Cloud Run container runtime contract, which is the same as the Knative Serving runtime contract. For example, the containers will get the same set of environment variables, and the requests will get the same set of guaranteed headers on both Knative and Google Cloud Run.
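To make the shared contract concrete, here is a minimal sketch of a container entrypoint in Python. It assumes the `PORT` and `K_SERVICE` environment variables that both platforms inject. The fallback values (`8080`, `"unknown"`) and the names `listen_port`, `Hello`, and `main` are illustrative and not part of either contract.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def listen_port(environ=os.environ, default=8080):
    """Return the port the platform asks the container to listen on."""
    # PORT is injected by the platform; the default is only for local runs.
    return int(environ.get("PORT", default))


class Hello(BaseHTTPRequestHandler):
    def do_GET(self):
        # K_SERVICE carries the service name; "unknown" is a local fallback.
        service = os.environ.get("K_SERVICE", "unknown")
        body = f"hello from {service}\n".encode()
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def main():
    # Listen on all interfaces so the platform's proxy can reach the server.
    HTTPServer(("0.0.0.0", listen_port()), Hello).serve_forever()
```

Calling `main()` as the image's entrypoint should behave the same way on either platform, since the code only depends on what the contract provides.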

Furthermore, the Cloud Run REST API implements the Knative Serving API specification to extend the compatibility to the control plane as well. (I’ll review how much of it is fully implemented and what parts are not in the next article.)

# Use Knative API on Cloud Run today

Here’s a simple Knative Service manifest file you can directly deploy to Cloud Run:

```yaml
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: hello
  annotations:
    autoscaling.knative.dev/maxScale: "100"
spec:
  template:
    spec:
      containerConcurrency: 50
      containers:
      - image: gcr.io/cloudrun/hello
        resources:
          limits:
            cpu: "1"
            memory: 512Mi
        env:
        - name: LOG_LEVEL
          value: debug
```

```sh
$ gcloud alpha run services replace app.yaml --platform=managed
```

This example intentionally omits all the parts that don’t work on Cloud Run. 😼

However, just like `kubectl apply`, you can use this command to deploy Knative Services to Cloud Run. Now, let’s talk about the parts I intentionally hid from you.

# Parts that do not work (yet)

Some parts of the Knative Serving API are not implemented on Cloud Run yet, and some may never be.[1] These cases usually stem from one of these reasons:

- Knative API has leaky abstractions relying on Kubernetes primitives (which aren’t available on Cloud Run)

- some container knobs can’t be done with gVisor yet

- it’s waiting to be implemented

If you take a look at the Knative `RevisionSpec` type in the Cloud Run REST API reference and search for “not supported”, you’re going to find that many API fields aren’t supported yet.[1]

## Unsupported fields

- volumes & volume mounts: Knative currently does not allow mounting arbitrary volume types even on Kubernetes, so the options are limited:
  - `configMap` volume: Cloud Run currently doesn’t have the notion of a ConfigMap (use `env` instead).
  - `secret` volume: Cloud Run doesn’t have “Secret” objects, but Google now has a Secret Manager, so you can expect it might be integrated at some point. April 2021 update: Secret Manager support for Cloud Run is now in preview.
- `envFrom`: this is actually a “RECOMMENDED” support in the spec, but due to the lack of ConfigMaps/Secrets, it’s not implemented. You need to specify env vars as literal values in the manifest.
- liveness/readiness probes: in practice this could be done, but it seems not to be implemented yet. There were also discussions in Knative about removing support for probes.
- `imagePullPolicy`: Cloud Run has its own efficient way of handling images, as it does not actually pull the image from the registry when a new container starts.
- `securityContext`: this sets which UID to run the entrypoint as, and can probably be implemented.
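As a rough illustration of how you might catch these fields before deploying, here is a small Python sketch that scans a Knative Service manifest (already parsed into a dict) for the fields listed above. The field names are the standard Kubernetes/Knative ones, but the selection simply mirrors this list and is an assumption that will drift as Cloud Run evolves. The function name `find_unsupported_fields` is hypothetical and not part of any tool.

```python
# Fields this article lists as unsupported; treat the sets as a snapshot,
# not as an authoritative or current list.
UNSUPPORTED_REVISION_FIELDS = {"volumes"}
UNSUPPORTED_CONTAINER_FIELDS = {
    "volumeMounts",
    "envFrom",
    "livenessProbe",
    "readinessProbe",
    "imagePullPolicy",
    "securityContext",
}


def find_unsupported_fields(service):
    """Return dotted paths of listed-as-unsupported fields in a Service dict."""
    revision = service.get("spec", {}).get("template", {}).get("spec", {})
    found = [
        f"spec.template.spec.{name}"
        for name in sorted(revision.keys() & UNSUPPORTED_REVISION_FIELDS)
    ]
    for i, container in enumerate(revision.get("containers", [])):
        for name in sorted(container.keys() & UNSUPPORTED_CONTAINER_FIELDS):
            found.append(f"spec.template.spec.containers[{i}].{name}")
    return found
```

An empty result only means none of the fields named here appear in the manifest; the Cloud Run API reference remains the source of truth.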

## Knative features that aren’t part of the API Specification

Knative makes heavy use of Kubernetes annotations and labels to configure many aspects of a Service (autoscaling, visibility). These annotations are not defined in the Knative API spec, so naturally, Cloud Run may treat the unimplemented ones as free-form key-value pairs[2] and silently ignore them.

- The `autoscaling.knative.dev/minScale` annotation is not yet supported, as Cloud Run always scales apps down to 0 when they are inactive for a while. There is currently a validation that prevents this value from being specified. April 2021 update: Cloud Run now supports warm instances through this annotation. These instances stay around to reduce cold starts and are charged at a lower price point.
- The `autoscaling.knative.dev/maxScale` annotation is supported to set the maximum number of container instances on Cloud Run.
- The `serving.knative.dev/visibility` label, which sets whether a service is accessible externally or not, is not supported, as all Cloud Run services currently have a public route (`*.run.app`) that is authorized (or allowed) using Cloud IAM permission bindings.

## Parts of the API that work differently

- labels: The Cloud Run API is not 100% compatible with Kubernetes label keys. For example, `example.com/foo` is a valid label key in Kubernetes, but is not allowed in the Cloud Run API. Instead, it supports the GCP resource labels format.
- `serviceAccountName`: In Knative this refers to a Kubernetes Service Account. However, in Cloud Run, it’s a GCP service account email address, so they have different syntax.
- resource requests/limits: While requests/limits help you burst in a Kubernetes cluster, Cloud Run will provision the amount of CPU/memory specified in `limits` with a gVisor sandbox, so `requests` don’t really do anything.
- cpu limits: Cloud Run currently supports only `1` or `2` CPUs (subject to change) and it doesn’t support fractional core shares (e.g. `500m`).
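The differences above can be turned into a simple pre-deploy check. The Python sketch below is only an approximation under stated assumptions: the allowed CPU values come from the list above and are subject to change, the label key pattern is a rough guess at the GCP resource label format, and the "contains `@`" test is a crude stand-in for validating a service account email. The name `check_differences` is hypothetical.

```python
import re

# Values taken from the list above ("subject to change").
ALLOWED_CPU_LIMITS = {"1", "2"}
# Rough approximation of the GCP label key format (an assumption):
# a lowercase letter, then lowercase letters, digits, "_" or "-".
GCP_LABEL_KEY = re.compile(r"[a-z][a-z0-9_-]{0,62}")


def check_differences(service):
    """Return human-readable warnings for a Knative Service dict."""
    warnings = []
    for key in service.get("metadata", {}).get("labels", {}):
        if not GCP_LABEL_KEY.fullmatch(key):
            warnings.append(f"label key {key!r} is not in GCP label format")
    revision = service.get("spec", {}).get("template", {}).get("spec", {})
    account = revision.get("serviceAccountName")
    if account is not None and "@" not in account:
        warnings.append(f"serviceAccountName {account!r} is not an email address")
    for container in revision.get("containers", []):
        resources = container.get("resources", {})
        if "requests" in resources:
            warnings.append("resources.requests has no effect; only limits apply")
        cpu = resources.get("limits", {}).get("cpu")
        if cpu is not None and str(cpu) not in ALLOWED_CPU_LIMITS:
            warnings.append(f"cpu limit {str(cpu)!r} is not one of 1 or 2")
    return warnings
```

A manifest written for a Kubernetes cluster, with a domain-prefixed label, a Kubernetes Service Account name, and a `500m` CPU limit, would produce one warning per difference, which gives a quick checklist of what to adapt.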

# Conclusion

Cloud Run is still improving. If anything above prevents you from using it, you can always opt in to use Cloud Run for Anthos, which runs on a GKE cluster with the open source Knative Serving implementation.

Otherwise, I recommend moving to declarative deployments on Cloud Run with `gcloud run services replace` (currently beta) or Terraform, and automating your rollouts!

I hope you enjoyed this honest API review. I see no harm in discussing the parts that aren’t there, so we can get your feedback and make it better. After all, my job is to make sure developers have a fun and productive time on Cloud Run. If you have comments, feel free to hit me up on Twitter.

[1] That said, Cloud Run for Anthos actually is Knative and runs on Kubernetes, so these limitations don’t apply to it.

[2] …which they are. If you’re developing operators for Kubernetes, consider not using annotations for functionality that has reached v1 or “stable”. Follow Kubernetes’ example, and try restricting usage of annotations to alpha or beta features.